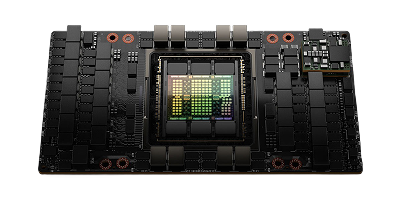

NVIDIA HGX B300

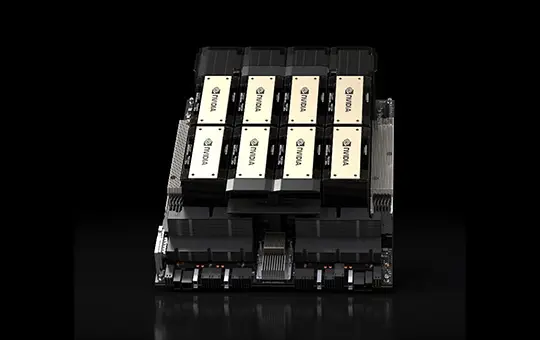

Eight Blackwell Ultra GPUs with up to 288 GB HBM3e per GPU. 50% more memory than B200 and over 100 PFLOPS dense FP4 per node. Built for AI reasoning at scale. Designed, deployed, and managed by CUDO.

Perfect for a range of workloads

Over 250 tokens per second per user on DeepSeek R1-671B from a single 8-GPU node. Up to 2.3 TB of HBM3e per node keeps entire models in memory for low-latency inference on dedicated B300 clusters.

Up to 1.5X faster training than B200 with FP4 precision. Up to 2.3 TB HBM3e per node for trillion-parameter models and large MoE architectures with fewer memory bottlenecks.

Full model weights held in memory for agentic workflows and long-context reasoning. No offloading, no re-computation. Enough memory per GPU to support models with 128K+ token context windows.
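As a rough check on the memory figures above, the sketch below multiplies out the per-node HBM3e capacity and compares it with a weights-only footprint for a 671B-parameter model. The bytes-per-parameter values (1 byte for FP8, 0.5 byte for FP4) and the decimal GB convention are assumptions made for illustration. KV cache, activations, and runtime overhead are ignored, so real headroom will be smaller.

```python
# Back-of-envelope memory check. Weights only; KV cache and activations are ignored.
GPUS_PER_NODE = 8
HBM_PER_GPU_GB = 288  # "up to" figure quoted in the text


def node_hbm_gb(gpus=GPUS_PER_NODE, per_gpu_gb=HBM_PER_GPU_GB):
    """Total HBM3e per node in decimal GB."""
    return gpus * per_gpu_gb


def weights_gb(params_billion, bytes_per_param):
    """Weights-only footprint: 1e9 params x bytes per param = decimal GB."""
    return params_billion * bytes_per_param


total = node_hbm_gb()
print(f"Node HBM3e: {total} GB (~{total / 1000:.1f} TB)")

# Assumed precisions: FP8 = 1 byte per parameter, FP4 = 0.5 byte per parameter.
for label, bytes_per_param in (("FP8", 1.0), ("FP4", 0.5)):
    w = weights_gb(671, bytes_per_param)
    print(f"{label}: weights ~{w:.1f} GB, headroom ~{total - w:.1f} GB")
```

On these assumptions the node total comes to 2,304 GB, which matches the "up to 2.3 TB" figure quoted above.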

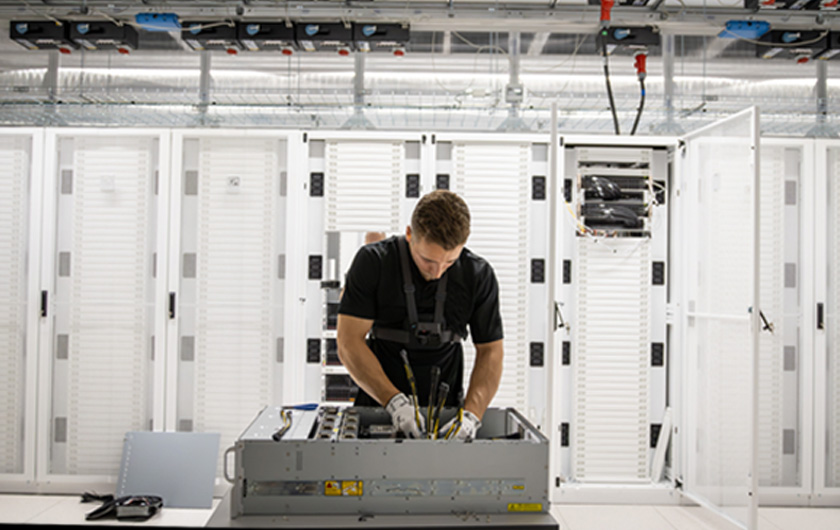

Dedicated Blackwell Ultra clusters, designed and managed by CUDO

Deployed across 16 ISO-certified data centres

Scale to 1,000+ GPUs across dedicated multi-node clusters

High-speed networking with NVIDIA InfiniBand or Spectrum-X Ethernet. Confirm per-GPU bandwidth with our engineering team.

Expert rack-level design, installation, and benchmarking before handoff

Compatible with Slurm, Kubernetes, and NVIDIA Base Command

24/7 monitoring, management, and engineering support

Available at the most cost-effective pricing

Launch your AI products faster with on-demand GPUs and a global network of data center partners

Bare metal

Powered by renewable energy

No noisy neighbors

Spectrum-X local networking

300 Gbps external connectivity

NVMe SSD storage

Enterprise

Powerful GPU clusters

Scalable data center colocation

Large quantities of GPUs and hardware

Optimize to your requirements

Expert installation

Scale as your demand grows

Specifications

NVIDIA HGX B300 specifications

Starting from

Contact us for pricing

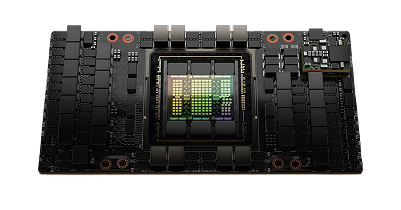

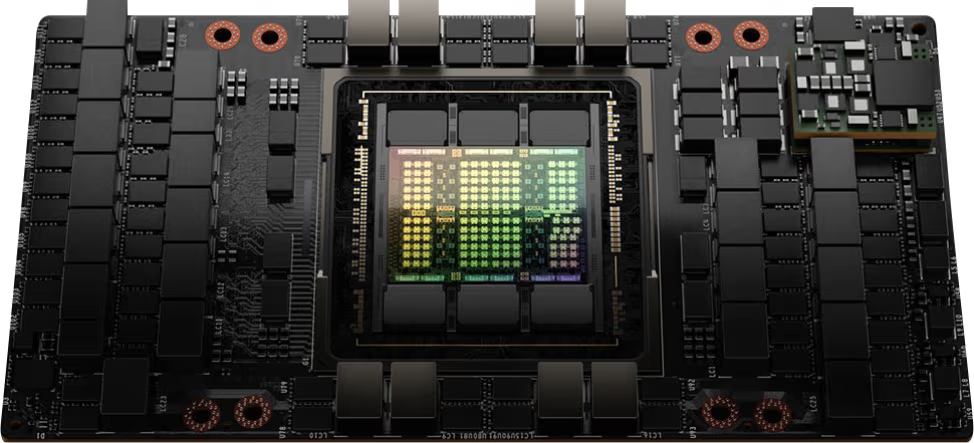

| Specification | NVIDIA HGX B300 |
| --- | --- |
| Architecture | NVIDIA Blackwell |
| GPU | 8x NVIDIA Blackwell Ultra SXM |
| GPU memory | 2.1 TB total, 62 TB/s HBM3e bandwidth |
| FP4 tensor core performance | 144 PFLOPS (sparse) / 108 PFLOPS (dense) |
| FP8 tensor core performance | 72 PFLOPS |
| NVIDIA NVSwitch | 2x |
| NVIDIA NVLink bandwidth | 14.4 TB/s aggregate bandwidth |
| System power usage | ~14.5 kW max |
| CPU | Intel Xeon 6776P processors |
| System memory | 2 TB, configurable to 4 TB |
| Networking | 8x OSFP ports serving 8x NVIDIA ConnectX-8 VPI, up to 800 Gb/s of NVIDIA InfiniBand/Ethernet; 2x dual-port QSFP112 NVIDIA BlueField®-3 DPU, up to 400 Gb/s of NVIDIA InfiniBand/Ethernet |
| Management network | 1GbE onboard network interface card (NIC) with RJ45; 1GbE RJ45 host baseboard management controller (BMC) |
| Storage | OS: 2x 1.9 TB NVMe M.2; internal storage: 8x 3.84 TB NVMe U.2 |
| Software | NVIDIA AI Enterprise (optimized AI software), NVIDIA Mission Control (AI data center operations and orchestration with NVIDIA Run:ai technology), NVIDIA DGX OS (operating system), supports Red Hat Enterprise Linux / Rocky / Ubuntu |
| Rack units (RU) | 10 |
| Operating temperature | 10-35°C / 50-90°F |
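The node-level specifications above can be broken down into approximate per-GPU figures. The sketch below divides each aggregate value evenly across the eight GPUs. It takes the 108 PFLOPS FP4 figure as the dense value, an assumption based on the "over 100 PFLOPS dense FP4 per node" claim earlier on the page. The per-GPU power share is only a notional split, since system power also covers CPUs, NICs, and fans.

```python
# Node-level figures copied from the specifications; the even per-GPU split is an assumption.
NODE = {
    "gpus": 8,
    "fp4_dense_pflops": 108.0,   # assumed to be the dense FP4 value
    "hbm_bandwidth_tbs": 62.0,
    "nvlink_tbs": 14.4,
    "max_power_kw": 14.5,
}


def per_gpu(node):
    """Divide each aggregate figure evenly across the GPUs in the node."""
    n = node["gpus"]
    return {key: value / n for key, value in node.items() if key != "gpus"}


def dense_fp4_pflops_per_kw(node):
    """Peak dense FP4 throughput per kW of maximum system power."""
    return node["fp4_dense_pflops"] / node["max_power_kw"]


shares = per_gpu(NODE)
for key, value in shares.items():
    print(f"{key} per GPU: {value:.3f}")
print(f"Dense FP4 per kW: {dense_fp4_pflops_per_kw(NODE):.2f} PFLOPS")
```

These are peak datasheet ratios, not measured throughput; sustained performance depends on the workload and cluster configuration.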

Where B300 clusters deliver the biggest impact

Explore use cases for the NVIDIA B300, including frontier model training, AI reasoning, large-scale inference, and sovereign and regulated AI.

AI reasoning and test-time scaling

Up to 288 GB HBM3e holds full model states in memory while Blackwell Ultra's enhanced Transformer Engine doubles attention throughput. The result is that reasoning models process longer chains of thought without offloading, maintaining output quality at significantly higher speed. An 8-GPU Blackwell node delivers over 250 tokens per second per user on DeepSeek R1-671B. All CUDO clusters are benchmarked and validated before handoff.

Large-scale inference at lower cost per token

Up to 5X higher throughput per megawatt compared to Hopper. More memory per GPU means fewer nodes to serve the same model, which lowers infrastructure cost and operational complexity.
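To illustrate how per-GPU memory affects the number of GPUs needed to serve a model, the sketch below estimates the minimum GPU count required just to hold a model's weights. The B200 capacity is derived from the "50% more memory than B200" statement (288 / 1.5 = 192 GB), and the 671 GB footprint assumes a 671B-parameter model stored at 1 byte per parameter. Both are illustrative assumptions, and real deployments need extra memory for KV cache and activations.

```python
import math

B300_GB = 288            # "up to" per-GPU figure from the text
B200_GB = B300_GB / 1.5  # derived from "50% more memory than B200" -> 192.0


def min_gpus(weights_gb, per_gpu_gb):
    """Smallest GPU count whose combined memory holds the weights alone."""
    return math.ceil(weights_gb / per_gpu_gb)


# Assumed footprint: 671B parameters at 1 byte each (FP8), weights only.
weights = 671
print("B300 GPUs needed:", min_gpus(weights, B300_GB))
print("B200 GPUs needed:", min_gpus(weights, B200_GB))
```

On these assumptions the weights fit on three B300 GPUs versus four B200 GPUs. The gap widens for larger models, although practical sizing also depends on parallelism strategy and batch size.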

Frontier model training and fine-tuning

Up to 1.5X faster training than B200 with FP4 precision across multi-node B300 clusters. Up to 2.3 TB of HBM3e per 8-GPU node holds larger model states in memory, reducing the need for complex model parallelism and the communication overhead it creates.

Sovereign and regulated AI

Deploy B300 clusters in ISO-certified data centres globally. Meet data residency and regulatory requirements with dedicated infrastructure under your control. Your hardware, your jurisdiction, managed by CUDO.

Blog

Resources

- Emmanuel Ohiri

Browse alternative GPU solutions for your workloads

Access a wide range of performant NVIDIA and AMD GPUs to accelerate your AI, ML & HPC workloads

NVIDIA H100 PCIe

Price on request

Scale with high performance H100 GPUs on our reserved cloud.