NVIDIA Blackwell GPUs

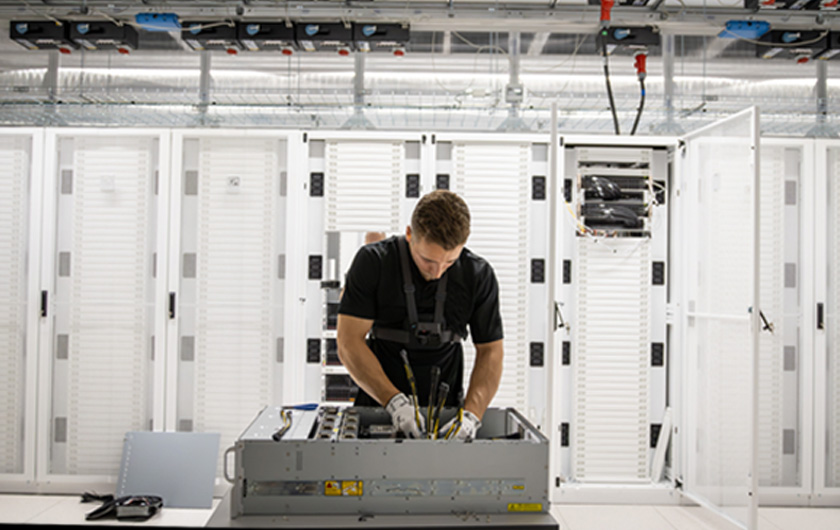

Up to 4X faster training and 30X faster inference than Hopper. CUDO deploys managed Blackwell clusters in two liquid-cooled form factors: 8-GPU HGX nodes and 72-GPU NVL72 racks. Each is deployed, managed, and supported for your workload.

Infrastructure and technology partners

Choose the right Blackwell configuration

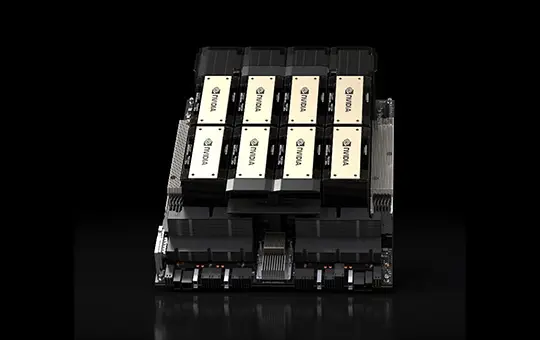

NVIDIA HGX B200

Blackwell. 8 GPUs per node.

Up to 72 PFLOPS dense FP4 per 8-GPU node with 192 GB HBM3e per GPU. Up to 4X faster training and 30X faster inference than Hopper. The most widely deployed Blackwell configuration. Shipping now.

NVIDIA HGX B300

Blackwell Ultra. 8 GPUs per node.

Over 100 PFLOPS dense FP4 per 8-GPU node with up to 288 GB HBM3e per GPU. 2X attention throughput and 50% more memory than B200. Built for AI reasoning.

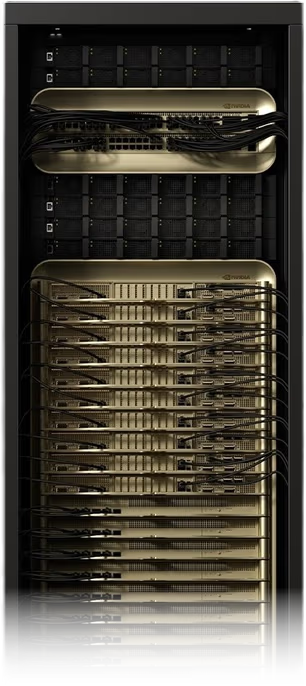

NVIDIA GB200 NVL72

Blackwell. 72 GPUs per rack, liquid-cooled.

72 Blackwell GPUs and 36 Grace CPUs in a single NVLink domain. The full rack operates as one massive GPU with up to 14 TB HBM3e. Available now.

NVIDIA GB300 NVL72

Blackwell Ultra. 72 GPUs per rack, liquid-cooled.

Over 1000 PFLOPS dense FP4 and 20 TB HBM3e in a single 72-GPU liquid-cooled rack. Up to 10X lower inference latency and up to 5X higher throughput per megawatt than Hopper.
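The rack-level memory figures can be roughly cross-checked against the per-GPU capacities quoted for the HGX nodes. The sketch below assumes that each NVL72 GPU carries the same HBM3e capacity as its HGX counterpart (192 GB for Blackwell, 288 GB for Blackwell Ultra) and that 1 TB = 1,000 GB. Both are assumptions made for illustration, not published rack specifications.

```python
# Rough cross-check of rack-level HBM3e capacity from per-GPU figures.
# Assumptions: NVL72 GPUs match the per-GPU capacities quoted for HGX nodes,
# and decimal units are used (1 TB = 1000 GB).
GPUS_PER_NVL72_RACK = 72

HBM_PER_GPU_GB = {
    "GB200 NVL72": 192,  # Blackwell (per-GPU figure quoted for HGX B200)
    "GB300 NVL72": 288,  # Blackwell Ultra (per-GPU figure quoted for HGX B300)
}


def rack_hbm_tb(gb_per_gpu: int, gpus: int = GPUS_PER_NVL72_RACK) -> float:
    """Total HBM3e across a rack, in decimal terabytes."""
    return gb_per_gpu * gpus / 1000


for name, gb in HBM_PER_GPU_GB.items():
    print(f"{name}: {rack_hbm_tb(gb):.1f} TB")
# GB200 NVL72: 13.8 TB
# GB300 NVL72: 20.7 TB
```

Under these assumptions the totals land close to the "up to 14 TB" and "20 TB" figures quoted for the two racks.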

Already running H100 or H200?

Blackwell delivers a generational leap over Hopper in raw throughput, cost per token, and energy efficiency. A single Blackwell Ultra GPU can hold a 70B-parameter model in FP16 without quantisation, something that required two or more H100s. NVL72 racks put 72 GPUs in a single NVLink domain, eliminating the InfiniBand bottleneck that limits Hopper multi-node scaling.
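The single-GPU claim follows from simple arithmetic on weight storage. The sketch below is a minimal estimate that counts model weights only (2 bytes per parameter in FP16) and ignores KV cache, activations, and framework overhead, so real deployments need additional headroom. The 80 GB H100 capacity is an assumption about which H100 variant is being compared; the function names are illustrative.

```python
import math

BYTES_PER_PARAM_FP16 = 2


def weights_gb(params_billions: float, bytes_per_param: int = BYTES_PER_PARAM_FP16) -> float:
    """Memory needed for model weights alone, in decimal GB."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    return params_billions * bytes_per_param


def min_gpus_for_weights(params_billions: float, gpu_memory_gb: float) -> int:
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(weights_gb(params_billions) / gpu_memory_gb)


H100_GB = 80              # assumption: 80 GB H100 variant
BLACKWELL_ULTRA_GB = 288  # per-GPU figure quoted for Blackwell Ultra

print(weights_gb(70))                                # 140
print(min_gpus_for_weights(70, H100_GB))             # 2
print(min_gpus_for_weights(70, BLACKWELL_ULTRA_GB))  # 1
```

A 70B-parameter model needs about 140 GB for FP16 weights, which exceeds one 80 GB H100 but fits within a single 288 GB Blackwell Ultra GPU with room left for inference state.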

CUDO manages the full transition: workload assessment, cluster design, deployment, and migration support. Your existing CUDA code runs on Blackwell without rewriting.

Which Blackwell system is right for you?

Workload

For inference and reasoning at node scale, start with HGX B300. For rack-scale inference or large-scale training, GB200 NVL72 (available now) or GB300 NVL72 (reservation) give you 72-GPU NVLink domains that eliminate intra-rack communication overhead. HGX B200 is the proven, cost-effective choice for training and fine-tuning at scale.

Availability & Sovereign

HGX B200 and GB200 NVL72 are available now. HGX B300 and GB300 NVL72 are available for reservation. All four configurations are deployed in ISO-certified data centres across North America, Europe, the UK, and MENA with Blackwell's hardware-level confidential computing.

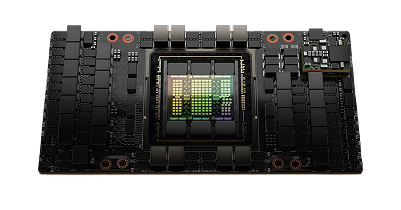

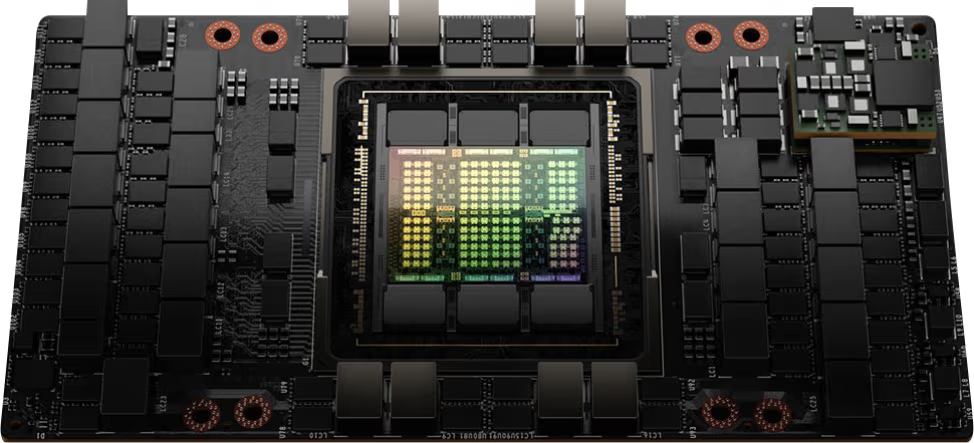

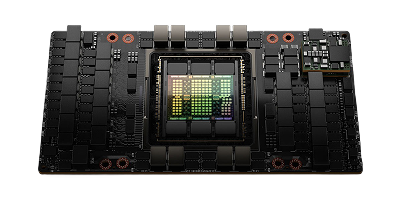

Inside the Blackwell architecture

Blackwell is NVIDIA’s data centre GPU architecture for frontier AI. It succeeds Hopper (H100, H200) with a fundamentally different approach to compute density, memory, and interconnect.

Compute

208 billion transistors across two reticle-limit dies, connected by a 10 TB/s chip-to-chip link and operating as a single unified GPU. Fifth-generation Tensor Cores support FP4, FP6, and FP8 precision formats. On Blackwell Ultra systems, attention-layer throughput is doubled for faster AI reasoning.

Interconnect

Fifth-generation NVLink delivers 1.8 TB/s bidirectional bandwidth per GPU. Within HGX nodes, 8 GPUs share a single NVLink domain. Within NVL72 racks, 72 GPUs communicate over a 130 TB/s NVLink fabric, forming the foundation for rack-scale AI. Scale-out networking uses NVIDIA Quantum-X800 InfiniBand or Spectrum-X Ethernet.
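The domain-level bandwidth figures follow from the per-GPU number. As a quick illustration, multiplying 1.8 TB/s by the GPU count in each NVLink domain gives the aggregate; the function name here is illustrative only.

```python
NVLINK_TBPS_PER_GPU = 1.8  # fifth-generation NVLink, bidirectional, per GPU


def aggregate_nvlink_tbps(gpus: int) -> float:
    """Aggregate NVLink bandwidth across one NVLink domain, in TB/s."""
    return round(gpus * NVLINK_TBPS_PER_GPU, 1)


print(aggregate_nvlink_tbps(8))   # 14.4  (8-GPU HGX node)
print(aggregate_nvlink_tbps(72))  # 129.6 (72-GPU NVL72 rack, quoted as 130 TB/s)
```

The 8-GPU result matches the 14.4 TB/s aggregate listed in the specifications, and the 72-GPU result rounds to the 130 TB/s quoted for NVL72.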

Reliability & security

A dedicated RAS engine uses AI-driven diagnostics to predict and prevent hardware faults. Blackwell's confidential computing with TEE-I/O support protects AI models and data at the hardware level without compromising performance. Blackwell is the first GPU architecture to offer TEE-I/O.

Blackwell at a glance

See individual product pages for full specifications.

| Specification | Value |
| --- | --- |
| Starting from | Contact us for pricing |
| Architecture | NVIDIA Blackwell |
| GPU | 8x NVIDIA Blackwell GPUs |
| GPU memory | 1,440 GB total, 64 TB/s HBM3e bandwidth |
| FP4 tensor core performance | 144 PFLOPS (sparse) / 72 PFLOPS (dense) |
| FP8 tensor core performance | 72 PFLOPS (sparse) / 36 PFLOPS (dense) |
| NVIDIA NVSwitch | 2x |
| NVIDIA NVLink bandwidth | 14.4 TB/s aggregate bandwidth |
| System power usage | ~14.3 kW max |
| CPU | 2x Intel Xeon Platinum 8570 processors, 112 cores total, 2.1 GHz (base), 4 GHz (max boost) |
| System memory | 2 TB, configurable to 4 TB |
| Networking | 4x OSFP ports serving 8x single-port NVIDIA ConnectX-7 VPI (up to 400 Gb/s NVIDIA InfiniBand/Ethernet), 2x dual-port QSFP112 NVIDIA BlueField-3 DPU (up to 400 Gb/s NVIDIA InfiniBand/Ethernet) |
| Management network | 10 Gb/s onboard NIC with RJ45, 100 Gb/s dual-port Ethernet NIC, host baseboard management controller (BMC) with RJ45 |
| Storage | OS: 2x 1.9 TB NVMe M.2, internal storage: 8x 3.84 TB NVMe U.2 |
| Software | NVIDIA AI Enterprise (optimized AI software), NVIDIA Mission Control (AI data center operations and orchestration with NVIDIA Run:ai technology), NVIDIA DGX OS (operating system), supports Red Hat Enterprise Linux / Rocky / Ubuntu |
| Rack units (RU) | 10 |
| Operating temperature | 10-35°C / 50-90°F |
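The node-level throughput figures can be broken down per GPU and per kilowatt. The sketch below uses only the FP4 and FP8 tensor core numbers and the ~14.3 kW maximum system power listed above. It assumes throughput divides evenly across the 8 GPUs and treats maximum system power (which also covers CPUs, networking, and storage) as the denominator, so the per-kilowatt values are a rough guide rather than a measured efficiency.

```python
GPUS_PER_NODE = 8
SYSTEM_POWER_KW = 14.3  # "~14.3 kW max" for the whole system, not GPUs alone

# (sparse, dense) tensor core throughput per 8-GPU node, in PFLOPS
NODE_PFLOPS = {"FP4": (144, 72), "FP8": (72, 36)}


def summarize(fmt: str) -> dict:
    """Derive per-GPU and per-kW figures from the node-level numbers."""
    sparse, dense = NODE_PFLOPS[fmt]
    return {
        "dense_per_gpu": dense / GPUS_PER_NODE,
        "sparse_to_dense": sparse / dense,
        "dense_per_kw": round(dense / SYSTEM_POWER_KW, 2),
    }


for fmt in NODE_PFLOPS:
    print(fmt, summarize(fmt))
# FP4 {'dense_per_gpu': 9.0, 'sparse_to_dense': 2.0, 'dense_per_kw': 5.03}
# FP8 {'dense_per_gpu': 4.5, 'sparse_to_dense': 2.0, 'dense_per_kw': 2.52}
```

Two patterns stand out: sparse throughput is twice the dense figure in both formats, and FP4 doubles FP8 throughput at the same power envelope.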

Why deploy Blackwell with CUDO

Dedicated bare-metal clusters. Every GPU is physically yours. Not a shared instance, not a spot allocation, not a virtual partition. No contention, no throttling, no noisy neighbours.

Operational from day one. CUDO handles site preparation, rack deployment, cooling infrastructure, networking, OS and driver provisioning, and 24/7 monitoring. For the average time from contract to live cluster, ask sales for current lead times.

Data residency across four regions. ISO-certified data centres in North America, Europe, the UK, and MENA. Deploy where your data needs to stay.

NVIDIA Preferred Partner. Direct access to Blackwell and Blackwell Ultra hardware, including during periods of constrained supply.

Blog

Resources

- Emmanuel Ohiri

Browse alternative GPU solutions for your workloads

Access a wide range of performant NVIDIA and AMD GPUs to accelerate your AI, ML & HPC workloads

NVIDIA H100 PCIe

Price on request

Scale with high-performance H100 GPUs on our reserved cloud.