Land. Power. Compute. Delivered for enterprise AI

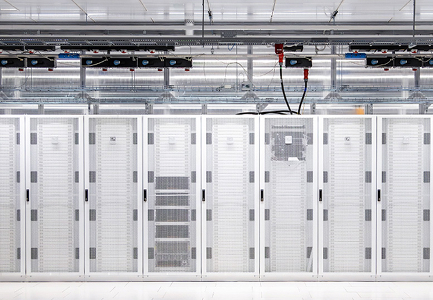

CUDO Compute designs, commissions and operates production AI infrastructure. We secure land, provision power and deploy NVIDIA GPU clusters to deliver environments engineered for large-scale AI training and inference workloads.

How we deliver

We focus on activating GPU capacity, not just allocating it, so that it is deployed, performant and ready for production.

Design

NVIDIA reference-aligned architectures validated for training and inference. Cluster design covering InfiniBand fabrics, high-performance storage (VAST, WEKA, DDN), power, cooling and rack layout.

Deploy

Secured NVIDIA systems through established OEM channels. Power-ready European sites with confirmed timelines. Hardware delivery through Dell, Lenovo, Supermicro and HPE partnerships.

Run

Foundational SRE as standard, not an add-on. 24/7 monitoring, incident response, firmware management and NVIDIA escalation paths. Clusters enter production in a stable, reference-aligned state.

Industry perspective

Stefan Nilsson, COO, Conapto

“In the Nordics, we place great importance on operational excellence, customer experience and transparent partnerships built on trust. CUDO reflects these values in the way they design and deploy high-density GPU environments, making them a natural partner for demanding AI and high-performance computing projects.”

Deployment proof

An AI infrastructure platform operator engaged CUDO to remediate, commission and operate NVIDIA H100 and H200 GPU infrastructure across data centers in North America, Europe and the Middle East.

Programme requirements

An AI infrastructure platform operator required production GPU capacity to support AI training and inference workloads

The customer had identified data center infrastructure resources across North America, Europe and the Middle East that required substantial remediation before GPU deployment

A consistent deployment and operating model was required across sites

Internal engineering teams needed to remain focused on AI platform and customer workloads rather than infrastructure remediation and cluster operations

What we delivered

Deployment of NVIDIA H100 and H200 GPU clusters across data centers in North America, Europe and the Middle East

Remediation and commissioning of existing infrastructure environments to support production GPU clusters

Design and deployment of the network architecture, storage infrastructure, management layer and node automation

Automated cluster deployment using golden images, health checks and benchmarking frameworks

Cluster environments commissioned and accepted into production within approximately two months

Operational outcomes

Production GPU infrastructure supporting AI training and inference workloads for multiple internal teams and external partners

Monitoring, hardware lifecycle support and operational consultancy delivered by CUDO together with partner infrastructure teams

A standardized deployment and operating model in place across all sites

Infrastructure environments operational across North America, Europe and the Middle East

AI infrastructure operated by experienced engineers

Designed, deployed and operated by engineers with over 20 years of data center experience. Across partnerships and direct operations, the team has collectively delivered and operated more than 40,000 GPUs globally.

Enterprise SLAs and real-time monitoring

Compliance across UK, EU, North America, APAC and Middle East jurisdictions

Operated by NVIDIA certified engineers

24/7 infrastructure monitoring and operational support

The CUDO infrastructure model

CUDO Compute addresses the real-world constraints of AI deployment across land, power and compute, delivering reliable GPU infrastructure for enterprise workloads.

Regional deployment

Deploy where your workload needs to run.

- Infrastructure across the UK, EU, North America, APAC and the Middle East

- In-region options for latency, residency and regulatory needs

- ISO 27001- and SOC 2-aligned operational environments

AI clusters

Dedicated GPU clusters for sustained AI workloads.

- High-density environments for training and inference

- Architectures aligned to NVIDIA reference designs

- Designed for multi-node AI deployments at scale

Power & colocation

Power-ready sites for high-density AI infrastructure.

- Sites selected for power, cooling and connectivity

- Deployment planning aligned to future capacity growth

- Colocation options matched to cluster requirements

Managed services

Operational delivery from deployment through production.

- Commissioning and acceptance testing

- 24/7 monitoring and L3 operational support

- Maintenance, patching and lifecycle management

Network & storage

The data plane that keeps GPUs fed.

- InfiniBand and Ethernet cluster design

- Support for VAST, WEKA, DDN and NetApp integrations

- Performance tuning across compute, storage and scheduling

Security & sovereignty

Controlled environments for enterprise and regulated workloads.

- Data residency and access boundary requirements

- Cluster isolation and security policy support

- Support for regulated and compliance-sensitive deployments

Land. Power. Compute.

Operating at global enterprise scale

CUDO Compute operates across ISO 27001-certified facilities in North America, Europe, the UK and MENA, supporting enterprise AI infrastructure at global scale.

Security and compliance

ISO 27001 Information Security

ISO 14001 Environmental Management

GDPR-aligned operations

Sovereign data residency enforcement

Capacity pipeline

250+ MW contracted by the end of 2026

750+ MW targeted by the end of 2027

Multi-GW pipeline including exclusive European sites

Deploy production-scale enterprise AI

Secure GPU infrastructure aligned to the NVIDIA Enterprise Reference Architecture, delivered with the power, engineering and operational support required for production AI deployments.