In Machine Learning (ML), hardware has a direct effect on how quickly models can be trained and deployed. Graphics Processing Units (GPUs) are widely used for ML because they are built for parallel work. A traditional Central Processing Unit (CPU) has a small number of cores optimised for running a few tasks quickly, whereas a GPU has thousands of simpler cores that perform many operations at once, which suits the matrix and vector arithmetic at the heart of most ML workloads. This parallelism can shorten model training times considerably, increasing productivity and enabling faster insights. GPUs also come with high-bandwidth memory, which helps when moving the large datasets typical of ML applications. Together, these traits lead to more efficient data processing, quicker turnaround times, and a more streamlined ML implementation.

In this article, we’ll discuss some of the best GPUs for running ML algorithms and the most crucial factors to consider when weighing hardware options for your ML project.

What is Machine Learning?

Machine learning falls under the umbrella of artificial intelligence (AI). It involves teaching computers to learn from experience and improve their performance, much like how humans learn. This is achieved through the use of data and algorithms.

For instance, image recognition is a practical application of machine learning. It involves teaching a computer to identify an object within a digital image based on pixel intensity. An example of this in the real world is labelling an X-ray as cancerous or non-cancerous.

But what's the primary purpose of machine learning? Well, it allows us to feed a computer algorithm a vast amount of data and then have the computer analyse it to make data-driven recommendations and decisions.

It's also worth noting that while AI and machine learning are closely related, they're not the same thing. AI is a broader concept that encompasses the idea of machines mimicking human intelligence. Machine learning, on the other hand, focuses on teaching machines to perform specific tasks and provide accurate results by identifying patterns. ML is a rapidly evolving field, with new techniques and applications being constantly developed. This makes it a critical area of study for businesses looking to leverage the power of AI.

Factors to consider when selecting a GPU for Machine Learning

Here are five essential qualities to consider when choosing a GPU for ML processing:

- Memory Size: Training requires enough GPU memory to hold the model itself plus the data being processed. GPUs with more memory can fit larger models and larger batches of data, which speeds up the learning process.

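- Performance: The GPU's computational power, measured in FLOPS (Floating Point Operations Per Second), sets an upper bound on how fast an ML model can be trained. Real workloads usually reach only part of the quoted peak, so treat the headline figure as a ceiling rather than a guarantee.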

- Power Consumption: High-performance GPUs consume significant power. Evaluating the energy efficiency of a GPU is essential, particularly for large-scale operations.

- Budget: GPUs range widely in price. Balancing budget constraints with performance needs is a crucial consideration.

- Software Compatibility: Some ML frameworks are optimised for specific GPUs. Ensuring your chosen GPU is compatible with your preferred software stack is critical.
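The memory, performance and power factors above can be turned into back-of-the-envelope numbers before comparing cards. The sketch below is only a rough illustration: the function names are hypothetical, and the defaults are assumptions rather than measured values (32-bit weights, one gradient copy and two optimiser-state copies per parameter, 20% of memory kept free, and 30% of peak FLOPS achieved in practice). It also ignores activation memory, which can be significant.

```python
# Rough sizing helpers for comparing GPUs. Every default here is an
# illustrative assumption, not a vendor figure or a benchmark result.


def estimate_training_memory_gb(num_params, bytes_per_param=4, optimizer_states=2):
    """Memory for weights + gradients + optimiser states, in GB (10^9 bytes).

    Activations and framework overhead are NOT included.
    """
    copies = 1 + 1 + optimizer_states  # weights, gradients, optimiser states
    return num_params * bytes_per_param * copies / 1e9


def fits_in_memory(num_params, gpu_memory_gb, headroom=0.8, **kwargs):
    """True if the estimate fits within a fraction (headroom) of GPU memory."""
    needed = estimate_training_memory_gb(num_params, **kwargs)
    return needed <= gpu_memory_gb * headroom


def estimate_training_hours(total_flop, peak_flops, utilisation=0.3):
    """Hours to execute total_flop operations at a fraction of peak FLOPS."""
    seconds = total_flop / (peak_flops * utilisation)
    return seconds / 3600


def estimate_energy_kwh(power_watts, hours):
    """Energy drawn by one card running at power_watts for the given hours."""
    return power_watts * hours / 1000


if __name__ == "__main__":
    params = 1_000_000_000  # hypothetical 1-billion-parameter model
    print("Estimated memory (GB):", estimate_training_memory_gb(params))
    print("Fits on a 24 GB card:", fits_in_memory(params, 24))
    print("Fits on a 16 GB card:", fits_in_memory(params, 16))
```

Under these assumptions a 1-billion-parameter model needs about 16 GB before activations, so a 16 GB card is already too tight once headroom is reserved. Changing `bytes_per_param` to 2 models 16-bit training and halves the estimate.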


Best GPUs for Machine Learning

Taking the above factors into consideration, let’s look at four of the best options for executing ML tasks: three GPUs and one purpose-built alternative.

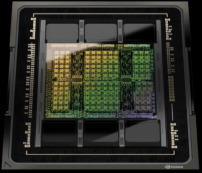

1. NVIDIA Tesla V100

NVIDIA's Tesla V100 is often hailed as the gold standard for ML applications. With 640 Tensor Cores, it provides immense computational power, making it ideal for demanding ML workloads. Furthermore, its HBM2 high-bandwidth memory, available in capacities of up to 32GB, ensures swift data handling, enhancing overall model training speed.

2. NVIDIA Titan RTX

The Titan RTX, another NVIDIA offering, is a high-performing GPU designed with ML developers in mind. It features Turing architecture, which enhances AI capabilities, and its 24GB GDDR6 memory allows for efficient data processing. Although slightly less powerful than the Tesla V100, the Titan RTX offers a more cost-effective solution for businesses on a tighter budget.

3. AMD Radeon VII

Although NVIDIA dominates the ML GPU market, AMD's Radeon VII holds its own with 16GB HBM2 memory and 60 compute units. While it may not match NVIDIA's offerings regarding ML-specific features, its impressive raw performance makes it a viable option for businesses looking to diversify their hardware sources.

4. Google TPU

Google's Tensor Processing Unit (TPU) is not a GPU but an accelerator custom-built for ML workloads. Its ability to perform high volumes of low-precision computations, a common requirement in ML, sets it apart. However, it's worth noting that TPUs for model training are offered as a service through Google Cloud rather than sold as hardware, making them less accessible for on-premise solutions.
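To make "low-precision computations" concrete, the sketch below stores the same number in 16-bit, 32-bit and 64-bit floating point using Python's standard `struct` module and reports the size and rounding error of each. It uses IEEE 754 half precision (`e` format) purely as an example of a low-precision format; the article does not say which formats a particular accelerator uses, and the helper names are hypothetical.

```python
import struct


def round_trip(value, fmt):
    """Store a Python float in the given struct format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


def precision_report(value):
    """Compare storage size and rounding error across float widths."""
    formats = (("float16", "<e"), ("float32", "<f"), ("float64", "<d"))
    report = {}
    for name, fmt in formats:
        stored = round_trip(value, fmt)
        report[name] = {
            "bytes": struct.calcsize(fmt),      # storage per value
            "stored": stored,                   # value after rounding
            "abs_error": abs(stored - value),   # rounding error introduced
        }
    return report


if __name__ == "__main__":
    for name, row in precision_report(0.1).items():
        print(name, row)
```

Halving the bytes per value halves memory traffic and lets more values fit in the same memory, at the cost of a larger rounding error. Many ML models tolerate that error, which is why hardware tuned for low precision can be attractive.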

Choosing the right GPU for your ML tasks can significantly enhance your business's AI capabilities. Whether you opt for the high-powered NVIDIA Tesla V100, the value-for-money Titan RTX, the raw performance of AMD's Radeon VII, or the ML-specific Google TPU, your choice should align with your business's unique needs and resources.
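As a simple illustration of matching a card to a requirement, the sketch below shortlists the three GPUs by memory alone. The memory figures are the ones quoted in this article; the function name and the 20 GB requirement are hypothetical, and a real decision would also weigh performance, power, price and software support.

```python
# Memory capacities in GB, as quoted in this article.
GPU_MEMORY_GB = {
    "NVIDIA Tesla V100": 32,
    "NVIDIA Titan RTX": 24,
    "AMD Radeon VII": 16,
}


def shortlist_by_memory(required_gb, catalogue=GPU_MEMORY_GB):
    """Return cards with at least required_gb, smallest sufficient card first."""
    candidates = [
        (memory, name)
        for name, memory in catalogue.items()
        if memory >= required_gb
    ]
    return [name for memory, name in sorted(candidates)]


if __name__ == "__main__":
    # Hypothetical workload that needs roughly 20 GB of GPU memory.
    print(shortlist_by_memory(20))
```

Sorting by capacity puts the smallest card that still meets the requirement first, which is a reasonable starting point when budget matters.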

At CUDO Compute, we understand the intricacies of ML hardware requirements. Our cloud infrastructure supports a wide range of GPUs, offering scalable, efficient solutions for your business's ML needs. Contact us to find out how we can help optimise your ML workloads.

About CUDO Compute

CUDO Compute is a fairer cloud computing platform for everyone. It provides access to distributed resources by leveraging underutilised computing globally on idle data centre hardware. It allows users to deploy virtual machines on the world’s first democratised cloud platform, finding the optimal resources in the ideal location at the best price.

CUDO Compute aims to democratise the public cloud by delivering a more sustainable economic, environmental, and societal model for computing by empowering businesses and individuals to monetise unused resources.

Our platform allows organisations and developers to deploy, run and scale based on demands without the constraints of centralised cloud environments. As a result, we realise significant availability, proximity and cost benefits for customers by simplifying their access to a broader pool of high-powered computing and distributed resources at the edge.
