All posts

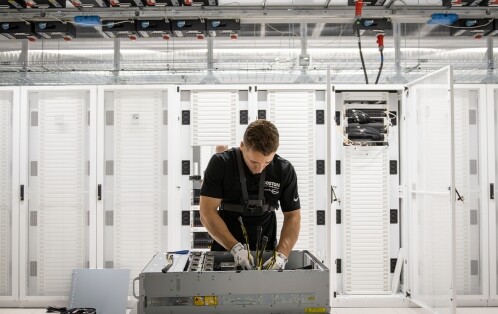

Hardware bottlenecks strand expensive compute. We detail the precise site readiness, cooling, and storage configurations needed to scale AI racks

Resources

Power is no longer a background variable in AI infrastructure. It is a first-order constraint that sets the ceiling on

- Emmanuel Ohiri

Resources

Global data center capacity will nearly triple by 2030, with AI driving most demand. Traditional infrastructure planning no longer works

- Emmanuel Ohiri

Resources

Read the comprehensive comparison between NVIDIA's H100 and H200 GPUs. Discover the expected improvements and performance gains for AI and

- Emmanuel Ohiri

NVIDIA introduced a pivotal breakthrough in AI technology by unveiling its next-gen Blackwell-based GPUs at NVIDIA GTC 2024.

- Emmanuel Ohiri

Resources

Ensemble learning combines the strengths of different algorithms to achieve greater accuracy and solve complex problems.

- Emmanuel Ohiri

Resources

DeepSeek's release of its R1 AI models is forcing proprietary AI projects to rethink their strategies and could potentially lead

- Emmanuel Ohiri

Resources

Compare top LLMs and AI orchestration tools to build fast, scalable, cost-efficient AI systems tailored to performance and business needs.

- Emmanuel Ohiri

Resources

Learn how to optimise architecture fit, memory bandwidth, and cluster topology to maximise ROI and avoid wasted cloud spend.

- Emmanuel Ohiri

Resources

The H100 reduces training costs, and the L40S beats the A100 on inference $/token efficiency. Real-world benchmarks reveal where each GPU maximises

- Emmanuel Ohiri

Resources

A 2025 survey found 88.8% of IT leaders believe a single cloud provider shouldn’t control their entire stack. Learn how

- Emmanuel Ohiri

Artificial intelligence

Resources

Hardware bottlenecks strand expensive compute. We detail the precise site readiness, cooling, and storage configurations needed to scale AI racks

- Emmanuel Ohiri

Resources

Power is no longer a background variable in AI infrastructure. It is a first-order constraint that sets the ceiling on

- Emmanuel Ohiri

Resources

Global data center capacity will nearly triple by 2030, with AI driving most demand. Traditional infrastructure planning no longer works

- Emmanuel Ohiri

Resources

Read the comprehensive comparison between NVIDIA's H100 and H200 GPUs. Discover the expected improvements and performance gains for AI and

- Emmanuel Ohiri

Resources

NVIDIA introduced a pivotal breakthrough in AI technology by unveiling its next-gen Blackwell-based GPUs at NVIDIA GTC 2024.

- Emmanuel Ohiri

Resources

Ensemble learning combines the strengths of different algorithms to achieve greater accuracy and solve complex problems.

- Emmanuel Ohiri

High performance computing

Resources

Hardware bottlenecks strand expensive compute. We detail the precise site readiness, cooling, and storage configurations needed to scale AI racks

- Emmanuel Ohiri

Resources

Global data center capacity will nearly triple by 2030, with AI driving most demand. Traditional infrastructure planning no longer works

- Emmanuel Ohiri

Resources

Read the comprehensive comparison between NVIDIA's H100 and H200 GPUs. Discover the expected improvements and performance gains for AI and

- Emmanuel Ohiri

Resources

NVIDIA introduced a pivotal breakthrough in AI technology by unveiling its next-gen Blackwell-based GPUs at NVIDIA GTC 2024.

- Emmanuel Ohiri

Resources

Learn how to optimise architecture fit, memory bandwidth, and cluster topology to maximise ROI and avoid wasted cloud spend.

- Emmanuel Ohiri

Resources

The H100 reduces training costs, and the L40S beats the A100 on inference $/token efficiency. Real-world benchmarks reveal where each GPU maximises

- Emmanuel Ohiri

Deep learning

Resources

Ensemble learning combines the strengths of different algorithms to achieve greater accuracy and solve complex problems.

- Emmanuel Ohiri

Resources

Compare top LLMs and AI orchestration tools to build fast, scalable, cost-efficient AI systems tailored to performance and business needs.

- Emmanuel Ohiri

Resources

It is important to strike the right balance between too much and too little learning in your machine-learning models. We explore

- Emmanuel Ohiri

Resources

A single checkpoint of a large AI model can require hundreds of gigabytes. Multiply that by frequent saves and large

- Emmanuel Ohiri

Resources

Read our breakdown of the costs of training large language models on Cloud GPUs, highlighting key budgetary considerations and cost-efficiency

- Emmanuel Ohiri

Resources

AI is everywhere, but how does it make decisions? Explainable AI (XAI) sheds light on the black box of AI.

- Emmanuel Ohiri
