Investing in AI infrastructure has shifted from being a strategic option to a business necessity. Budgets for AI are skyrocketing, and this trend shows no signs of slowing. Yet, as investments grow, so does the risk of overspending, with surveys revealing that the majority of CIOs have already exceeded their cloud budgets.

The drivers behind these overruns are complex and often hidden. Training frontier models is extremely expensive, while the long-term costs of renting GPUs in the cloud can far exceed initial estimates. Organizations are discovering that the compute footprint of modern AI is far heavier than anticipated. On top of this, logistical strains, from electricity demand that threatens power grids to the billions of gallons of water required to cool data centers, add new layers of financial and operational risk.

Complicating matters further is a lack of governance and cost visibility. Many enterprises struggle to track ROI or fully attribute spending across teams. Without strategic oversight, investments in AI infrastructure quickly spiral into uncontrolled expenses.

With so much at stake, designing AI infrastructure that is both scalable and cost-effective is no longer a luxury—it’s a requirement for survival. This article will show you how to build a robust AI infrastructure that supports innovation and growth without overspending.

Factors to consider before designing AI infrastructure

One of the biggest risks in scaling AI is financial waste. Overspending has become the norm rather than the exception, with surveys showing that more than two-thirds of CIOs have exceeded their budgets. The average overrun sits between 15% and 30%, underscoring just how difficult it is to predict costs in advance. Governance and cost visibility must be built into infrastructure decisions from the very beginning.

Equally important is accountability. While many organizations have introduced FinOps teams to monitor cloud and AI spending, these teams are often situated away from the engineers and architects making day-to-day decisions. Without embedding cost awareness into the development process, overspending becomes inevitable. The organizations that succeed are those that align financial discipline with technical execution.

A second key decision is where to run your AI workloads. Public cloud offers speed and flexibility, making it ideal for experimentation and rapid scaling. But for predictable, high-volume workloads, on-premises or hybrid approaches often deliver better cost control and long-term ROI. Industry research shows that most enterprises are now moving toward hybrid models, combining cloud agility with on-prem stability to achieve balance. This shift is also driven by regulatory requirements, data sovereignty concerns, and the need to reduce vendor lock-in.
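To make the cloud versus on-prem trade-off concrete, here is a minimal break-even sketch. All figures and names (`OnPremOption`, `break_even_month`) are hypothetical placeholders, and the model assumes a flat monthly cloud bill and flat on-prem running costs, which real workloads rarely have.

```python
from dataclasses import dataclass


@dataclass
class OnPremOption:
    upfront_cost: float          # hardware purchase (CapEx), hypothetical figure
    monthly_running_cost: float  # power, cooling, hosting, staff (OpEx)


def break_even_month(cloud_monthly_cost, on_prem, horizon_months=60):
    """Return the first month in which cumulative on-prem cost is no higher
    than cumulative cloud cost, or None if that never happens in the horizon."""
    for month in range(1, horizon_months + 1):
        cloud_total = cloud_monthly_cost * month
        on_prem_total = on_prem.upfront_cost + on_prem.monthly_running_cost * month
        if on_prem_total <= cloud_total:
            return month
    return None


# Illustrative numbers only: a steady workload costing 20,000 per month in the cloud
# versus a 240,000 purchase that costs 5,000 per month to run.
option = OnPremOption(upfront_cost=240_000, monthly_running_cost=5_000)
print(break_even_month(cloud_monthly_cost=20_000, on_prem=option))
```

If the break-even month falls well inside the expected lifetime of the workload, that is one signal (among several) that a predictable workload may be a candidate for on-prem or hybrid placement.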

| Pillar | Strategic Consideration |
|---|---|
| Flexibility vs. Cost | Cloud offers unmatched agility for experimentation, but predictable workloads may benefit from on-prem cost stability. |
| Regulatory & Security | On-prem or private cloud can provide tighter control over sensitive data and compliance-critical workloads. |
| Vendor Lock-in Risks | Proprietary tools and platforms can limit future flexibility; hybrid strategies help mitigate lock-in. |
| Performance & Latency | Real-time or latency-sensitive applications may require co-located on-prem or edge deployments. |
| Sustainability & Resource Constraints | Infrastructure choices have implications beyond finance: energy, water usage, and environmental impact should be factored into scaling decisions. |

Finally, you need to weigh trade-offs beyond cost. Latency-sensitive applications may demand local or edge deployments, while sustainability pressures are pushing organizations to consider the energy and water footprint of their infrastructure. Every choice—cloud, on-prem, or hybrid—has ripple effects on compliance, security, and long-term competitiveness.

Read more here: AI infrastructure budgeting template: Plan your costs effectively.

AI infrastructure decisions are not just operational—they are strategic. Choosing the wrong model or neglecting governance can erode margins, stall innovation, and even create regulatory exposure. Choosing wisely can unlock speed, agility, and lasting advantage.

Framework for designing scalable, cost-efficient AI infrastructure

Designing AI infrastructure is not just about picking servers or signing a cloud contract—it’s about aligning technology decisions with business strategy. Without a guiding framework, you risk chasing short-term gains that lead to long-term overspending and inflexibility. To avoid this, you can anchor your infrastructure planning around five pillars:

1. Align with business objectives: Every infrastructure choice should map directly to a business outcome. Are you building AI models to speed up product launches, reduce costs, or unlock new revenue streams? The answer determines whether you need a high-performance cluster for rapid innovation or a more cost-conscious setup that prioritizes stability. Those who tie infrastructure to key performance indicators (KPIs) can justify spending while ensuring that resources aren't wasted on low-impact experiments.

2. Right-size early, scale wisely: Overprovisioning is one of the biggest drivers of wasted spend. A smarter approach is to start small (using smaller models, cost-effective cloud instances, or shared resources) and scale only when workloads prove their value. A hybrid budgeting model, mixing capital expenditure (CapEx) for stable workloads with flexible operating expenditure (OpEx) for experimental needs, is highly effective here. This approach helps control hidden expenses that can silently inflate costs, such as idle GPU reservations, network egress fees, and redundant storage.

Read more: AI infrastructure budgeting template: Plan your costs effectively.

3. Build in flexibility: Vendor lock-in is one of the most dangerous traps in AI infrastructure. Proprietary services may look attractive at first, but can limit your options, slow innovation cycles, and inflate costs down the road. Industry data suggests a vast majority of IT leaders now believe no single cloud provider should control their entire stack, often citing prohibitive egress fees and infrastructure inflexibility as key inhibitors. A flexible design—such as a hybrid or multi-cloud strategy—is insurance against both technical and financial rigidity.

Read more: Why AI teams need cloud infrastructure without vendor lock-ins.

4. Monitor and optimize continuously: AI infrastructure is not something you can set and forget. Workloads evolve, models grow, and costs can spike unexpectedly. Continuous monitoring (through dashboards, utilization tracking, and FinOps practices) gives decision makers real-time visibility into spending.

Effective strategies include lifecycle cost modeling, which forecasts expenses from the initial R&D spikes to the long tail of production inference. With that forecast, you can decide in advance where fixed CapEx makes sense and where flexible OpEx models deliver more control, so that governance is proactive rather than reactive.

5. Future-proof for growth: The AI landscape is advancing at breakneck speed. A future-proof strategy ensures that today’s investments can evolve with tomorrow’s demands. This means being ready to adopt next-generation, energy-efficient hardware, using globally distributed GPU clouds to reduce latency, or meeting new regulatory and sustainability mandates. Thinking ahead will help you avoid the costly cycle of ripping and replacing infrastructure every few years.
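The lifecycle cost modeling described under pillar 4 can be sketched in a few lines. This is a deliberately simple model under stated assumptions: training is treated as a single one-off cost, inference spend starts at a fixed monthly amount and grows at a constant rate, and the function name and figures are hypothetical.

```python
def month_inference_overtakes_training(training_cost, monthly_inference_cost,
                                       monthly_growth=0.0, max_months=120):
    """Return the first month in which cumulative inference spend exceeds
    the one-off training cost, or None if it does not within max_months."""
    cumulative_inference = 0.0
    inference = monthly_inference_cost
    for month in range(1, max_months + 1):
        cumulative_inference += inference
        if cumulative_inference > training_cost:
            return month
        inference *= 1 + monthly_growth  # usage grows as adoption increases
    return None


# Illustrative numbers only: 500,000 to train, 50,000 per month to serve.
print(month_inference_overtakes_training(500_000, 50_000))
print(month_inference_overtakes_training(500_000, 50_000, monthly_growth=0.10))
```

Knowing roughly when the long tail of inference starts to dominate helps decide when a workload has become predictable enough to move from flexible OpEx to committed or owned capacity.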

The table below summarizes the five pillars and the strategic advantage each one provides:

| Pillar | Strategic Advantage |
|---|---|
| Business Alignment | Ensures infrastructure delivers measurable value |
| Right-Sizing & Scaling | Prevents premature overspend via hybrid CapEx/OpEx models |
| Built-in Flexibility | Avoids lock-in and preserves agility in dynamic markets |
| Continuous Optimization | Catches inefficiencies early with FinOps alignment |
| Future-Proofing | Supports growth, sustainability, and next-gen hardware adoption |

Together, these five pillars form a practical framework that you can use to evaluate every infrastructure decision. The goal is not just to support current workloads, but to build a foundation that scales well, adapts to change, and protects the bottom line.

Cost-optimization strategies to use when designing AI infrastructure

Designing scalable infrastructure is only half the battle—the real test is ensuring costs don’t spiral as workloads grow. While engineers focus on model accuracy and deployment, you must master the financial levers that keep AI sustainable. Here are five cost-optimization strategies every decision maker should know:

1. Negotiate smarter cloud contracts

Cloud costs are not fixed—they are negotiable. Long-term commitments and reserved instances can cut expenses by 40–60%, while short-term “spot” or preemptible instances can reduce costs for non-critical training workloads.

Yet, the real opportunity lies in balancing the two: using reservations for steady workloads and spot for bursty experiments. According to Gartner, 94% of enterprises overspend on cloud due to misaligned provisioning, making contract optimization a top priority.
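A rough way to reason about that balance is to price steady hours at a reserved rate and bursty hours at a spot rate, then compare against paying on-demand for everything. The sketch below is illustrative only: the function name, the hourly rate, and the discount levels are assumptions (the reserved discount uses the low end of the 40–60% range above), and it ignores spot interruptions and the cost of reservations that go unused.

```python
def blended_monthly_cost(steady_gpu_hours, burst_gpu_hours, on_demand_rate,
                         reserved_discount, spot_discount):
    """Cost of running steady hours on reservations and bursty hours on spot."""
    reserved_rate = on_demand_rate * (1 - reserved_discount)
    spot_rate = on_demand_rate * (1 - spot_discount)
    return steady_gpu_hours * reserved_rate + burst_gpu_hours * spot_rate


# Hypothetical month: 5,000 steady GPU-hours and 2,000 bursty GPU-hours.
on_demand_only = (5_000 + 2_000) * 2.00
blended = blended_monthly_cost(5_000, 2_000, on_demand_rate=2.00,
                               reserved_discount=0.40, spot_discount=0.60)
print(f"on-demand only: {on_demand_only:,.2f}")
print(f"blended:        {blended:,.2f}")
print(f"saving:         {1 - blended / on_demand_only:.0%}")
```

The split between steady and bursty hours is the input that matters most here; reserving capacity for hours that turn out to be bursty converts a discount into idle spend.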

Read more: AI training: Costs of GPU cloud infrastructure.

2. Adopt FinOps as a cultural discipline

FinOps is not just an operations model—it’s a cultural shift that embeds cost awareness into engineering. Research shows organizations that adopt FinOps can achieve 20–30% cloud savings by enforcing real-time visibility and shared accountability between finance and IT. For executives, this means championing cost as a performance metric alongside speed and accuracy.
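Shared accountability starts with being able to attribute spend to the team that incurred it. The sketch below assumes a simplified, hypothetical billing export (a list of records with a `cost` field and an optional `team` tag); real billing exports have provider-specific schemas.

```python
from collections import defaultdict


def attribute_spend(records):
    """Sum cost per team; records with a missing or empty tag go to 'untagged'."""
    totals = defaultdict(float)
    for record in records:
        team = record.get("team") or "untagged"
        totals[team] += record["cost"]
    return dict(totals)


def untagged_share(totals):
    """Fraction of total spend that cannot be attributed to any team."""
    total = sum(totals.values())
    return totals.get("untagged", 0.0) / total if total else 0.0


# Hypothetical billing records for one week.
records = [
    {"team": "search", "cost": 1200.0},
    {"team": "ads", "cost": 800.0},
    {"cost": 500.0},                 # no tag at all
    {"team": "search", "cost": 300.0},
    {"team": "", "cost": 200.0},     # empty tag
]
totals = attribute_spend(records)
print(totals)
print(f"untagged share: {untagged_share(totals):.1%}")
```

Tracking the untagged share over time is a simple proxy for cost visibility: the lower it is, the more of the bill someone is accountable for.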

3. Optimize workload placement

Not every AI task warrants the use of a GPU. For many smaller models or low-throughput inference workloads, CPUs can offer lower cost and greater energy efficiency—especially when optimized carefully.

Beyond the CPU-versus-GPU choice, dedicated inference accelerators (e.g., NPUs or ASICs) provide competitive performance using low-precision arithmetic (8-bit or 16-bit), reducing both memory demands and energy consumption, often with little or no loss in response quality.

Keep in mind that training and inference differ fundamentally. Training is compute-intensive but episodic; inference is continuous and cumulative. Since inference often dominates operational cost, it pays to optimize inference hardware first.

Smart placement also means mixing hardware types strategically:

- Heterogeneous systems, such as CPU–GPU hybrids, can deliver large gains: experiments show up to a 317% inference speedup when workloads are distributed intelligently.

- Even within GPU fleets, systems like Mélange optimize cost and placement by selecting GPU types tailored to your service’s request patterns, yielding cost reductions up to 77% in some LLM inference settings.

Finally, GPU utilization matters. Running inference for streaming services during the day and shifting to batch training at night can significantly increase utilization—reaching 60–85%—and dramatically improve your ROI.
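The effect of utilization on cost can be shown with simple arithmetic. In this sketch the fleet size, busy hours, and hourly rate are hypothetical, and the function names are illustrative; the point is only that the same fleet costs less per useful GPU-hour when idle time is filled.

```python
def daily_utilization(busy_gpu_hours_by_job, gpus, hours_per_day=24):
    """Fraction of the fleet's available GPU-hours that were actually used."""
    available = gpus * hours_per_day
    return sum(busy_gpu_hours_by_job.values()) / available


def cost_per_useful_gpu_hour(hourly_rate, utilization):
    """Effective price of each GPU-hour that did real work."""
    if utilization <= 0:
        raise ValueError("utilization must be positive")
    return hourly_rate / utilization


# Hypothetical 8-GPU fleet: daytime inference only, then with nightly batch training added.
inference_only = daily_utilization({"inference": 80}, gpus=8)
with_night_training = daily_utilization({"inference": 80, "training": 64}, gpus=8)

for label, u in [("inference only", inference_only),
                 ("plus night training", with_night_training)]:
    print(f"{label}: {u:.0%} utilized, "
          f"{cost_per_useful_gpu_hour(2.00, u):.2f} per useful GPU-hour")
```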

Save costs and conserve energy by aligning hardware to workload. Use CPUs or accelerators for light inference, deploy heterogeneous systems for balanced performance, and maximize GPU use through smart scheduling and placement.

4. Embrace model efficiency techniques

Techniques like pruning, quantization, and mixed-precision training can substantially reduce compute and memory with little or no loss of accuracy. For instance, approaches that jointly apply pruning and mixed-precision quantization have delivered models up to 69% smaller at equal accuracy, with faster search and training.

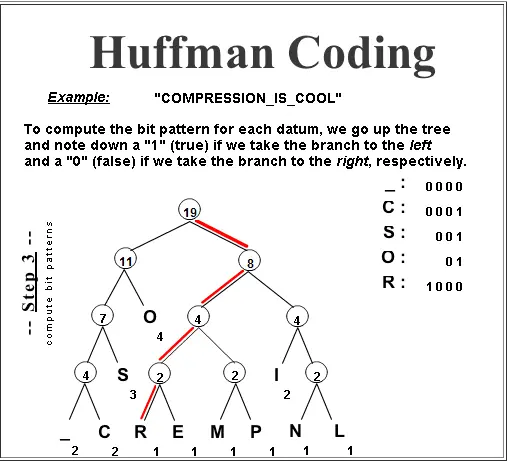

The classic Deep Compression strategy combined pruning, quantization, and Huffman coding to shrink model size by 35 to 49 times, while boosting speed 3–4 times and energy efficiency 3–7 times.

Source: Mathematica

Mixed-precision training itself can lead to 53% faster training and 50% less memory use, with no accuracy loss. Tools like PyTorch’s automatic mixed precision (AMP) package and modern GPUs—especially those with Tensor Cores—make this approach both accessible and impactful.

On the innovation front, activation-density-based mixed-precision techniques have shown around a 4.5 times reduction in compute and a 50% drop in training complexity. When paired with pruning on specialized hardware, they have yielded energy savings of 198 times for VGG19 and 44 times for ResNet18.

Investing early in model efficiency—through pruning, quantization, and mixed precision—can dramatically cut GPU hours and energy use, saving millions in operational cost downstream.
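To show what quantization does at the smallest scale, here is a toy sketch of symmetric 8-bit quantization in plain Python. It is not how production frameworks implement it (they work on tensors, often per channel, with calibration), and the function names and sample weights are made up for illustration. Storing each weight in one byte instead of a four-byte 32-bit float is where the memory saving comes from.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Made-up weights for illustration.
weights = [0.5, -1.27, 0.003, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(quantized)
print(f"largest rounding error: {max_error:.4f}")
```

The rounding error is bounded by half the scale, which is why small weights (like 0.003 here) can collapse to zero; whether that matters for accuracy has to be measured on the actual model.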

5. Schedule and automate cost controls

One of the most overlooked—but easiest—cost-savings levers is shutting down what you’re not using. For non-production workloads, scheduling automated shutdowns during off-hours often cuts those systems’ spend by 20–30%.

But automation doesn’t stop there. Across comprehensive automation frameworks—combining real-time monitoring, rightsizing, intelligent scaling, and shutdowns—some organizations report reductions in cloud spend of up to 50% compared to manual cost management.
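An off-hours shutdown policy can be expressed as a small rule that a scheduler evaluates periodically. The sketch below is provider-agnostic and hypothetical: the working hours, workdays, and function names are assumptions, and the actual stop and start calls would go through your cloud provider's own tooling.

```python
from datetime import datetime


def should_be_running(now, start_hour=8, end_hour=20, workdays=(0, 1, 2, 3, 4)):
    """True if a non-production resource should be up at `now`.
    Workdays use datetime.weekday() numbering (Monday is 0)."""
    return now.weekday() in workdays and start_hour <= now.hour < end_hour


def scheduled_hours_per_week(start_hour=8, end_hour=20, workdays=(0, 1, 2, 3, 4)):
    """Hours per week the resource stays up under the policy."""
    return (end_hour - start_hour) * len(workdays)


print(should_be_running(datetime(2024, 1, 3, 10)))   # a Wednesday morning
print(should_be_running(datetime(2024, 1, 3, 22)))   # the same Wednesday, late evening
print(should_be_running(datetime(2024, 1, 6, 10)))   # a Saturday morning
print(f"{scheduled_hours_per_week()} of 168 hours per week scheduled")
```

Fewer running hours do not translate one-to-one into lower spend, since storage, reserved capacity, and other charges continue while compute is stopped, so the realized saving should be measured against the bill.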

Cost optimization is not about doing less with AI—it’s about doing more with discipline. By combining smarter contracts, cultural adoption of FinOps, workload placement, model efficiency, and automation, leaders can dramatically extend the impact of every dollar invested.

Decision maker’s checklist

Building scalable AI infrastructure without overspending is a complex challenge, but the right questions can simplify it. This checklist is designed as a quick reference for executives—a tool you can use in boardrooms, budget reviews, or strategic planning sessions:

| Category | Key Questions for Decision Makers | Why It Matters |
|---|---|---|
| Strategic Alignment | – Is every infrastructure investment tied to a measurable business outcome? – Are ROI metrics clear and agreed upon across finance & engineering? | Prevents misaligned spending and ensures AI directly supports business goals. |
| Financial Discipline | – Do we monitor AI/cloud costs weekly, not quarterly? – Do we balance CapEx (stable workloads) vs. OpEx (flexible workloads)? | Keeps budgets under control and aligns costs with workload predictability. |
| Infrastructure Choices | – Are workloads right-sized before scaling? – Do we have a hybrid or multi-cloud strategy? – Are GPUs used only where necessary? | Reduces overspending and avoids vendor lock-in. |
| Operational Controls | – Are idle clusters monitored and automatically shut down? – Do we track hidden costs (data egress, redundant storage)? – Are we running lifecycle cost models? | Catches inefficiencies early and prevents runaway costs. |
| Future Readiness | – Are we preparing for new regulations and sustainability mandates? – Do we have a plan for adopting next-gen, energy-efficient hardware? – Are environmental costs (energy, water) factored in? | Ensures investments remain viable as technology, markets, and compliance evolve. |

Quick-reference callouts

Top 3 cost traps to watch:

- Idle GPU reservations that silently burn budget.

- Data egress fees from single-vendor cloud strategies.

- Inference costs, which often outweigh training costs by 5–10× over time.

Top 3 levers for savings:

- Spot/preemptible instances for non-urgent workloads.

- Mixed-precision training and model optimization.

- Automated shutdown policies for unused compute.

Rule of thumb: If an AI workload doesn’t clearly support business KPIs, isn’t right-sized, or isn’t being monitored, it’s costing more than it should.

This checklist isn’t just a reminder—it’s a guardrail. Executives who revisit it regularly will keep AI infrastructure aligned with strategy, financially disciplined, and flexible enough to scale without draining budgets.

Scaling smart by spending wisely

AI is no longer optional—it is a defining factor in who wins and who falls behind. Yet the evidence is clear that most organizations overspend, waste resources, and struggle to align infrastructure with strategy. The cost of doing nothing, or doing the wrong thing, is not just financial waste—it’s lost competitive advantage.

You face a choice: treat AI infrastructure as a strategic investment, or risk being outpaced by smarter, leaner competitors. The path forward is not about spending more; it's about spending wisely. Align with business goals, scale deliberately, embed financial governance, and future-proof every decision. That is how enterprises unlock AI's potential without jeopardizing profitability.

CUDO Compute's GPU clusters provide enterprise-grade performance without vendor lock-in, offering globally distributed, cost-efficient access to the compute power modern AI demands. Get started with our self-serve clusters in just a few clicks.
