AI FACTORY SOLUTIONS

Infrastructure for

industrial scale AI

AI factories designed, built and operated for production AI workloads, engineered for rapid activation, predictable timelines and enterprise scale execution.

Engineered for production AI

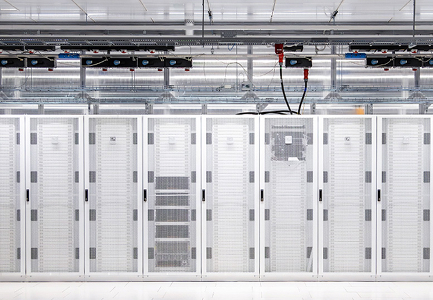

Dedicated and managed GPU infrastructure for training and inference, designed around power, networking, storage and operational control.

Cluster scale

From dedicated enterprise deployments to large multi-rack GPU environments.

Interconnect and storage

InfiniBand or Ethernet, plus high-performance storage architecture.

Operations model

24/7 monitoring, firmware lifecycle, incident response and vendor escalation.

Deployment models

Choose the deployment route that fits your control requirements, commercial model and time to production.

Dedicated AI clusters

Single-tenant GPU environments for training and inference, with isolated resources, defined operating boundaries and predictable performance for production AI workloads.

Customer and partner deployments

Customer-owned or partner-backed infrastructure, designed, deployed and operated by CUDO to accelerate time to production without compromising control or performance.

Delivery advantages

Power-first infrastructure

Sites are secured and engineered for density, cooling, layout and expansion before deployment begins.

Supply certainty

Capacity is secured through strategic land, power and infrastructure partnerships, supporting large scale deployments with predictable timelines.

Production architectures

Systems are designed and deployed against NVIDIA reference patterns to support stable production performance.

End to end delivery

Infrastructure is delivered from site readiness through cluster deployment and ongoing operations under one accountable model.

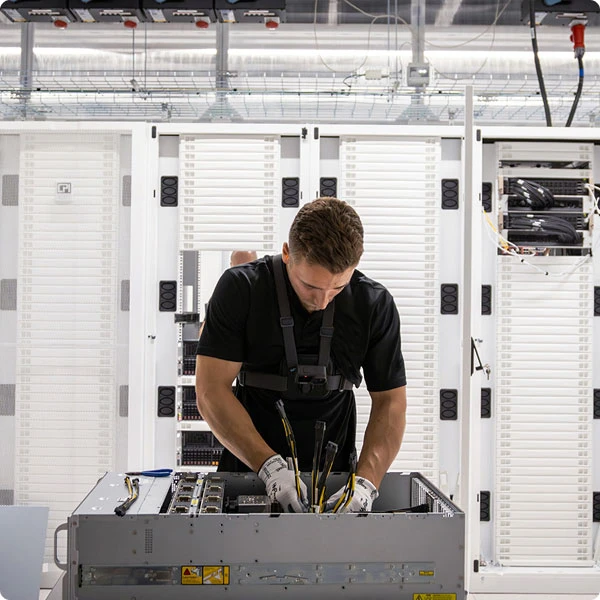

Engineering execution

Teams with deep HPC and data center experience oversee deployment, optimization and long term operational stability.

Production reliability

Environments are continuously monitored, maintained and supported to sustain performance and uptime.

Commercial alignment

Infrastructure delivery models support OPEX based consumption, predictable spend and long term planning across hardware generations and multi-site environments.

Security and sovereignty

by design

Single tenant or operator controlled clusters with complete physical and logical isolation.

Built in Tier III+ ISO 27001 and SOC 2 certified facilities.

Dedicated fibre interconnects for data residency across EU and UK jurisdictions.

Data encrypted at rest and in motion with customer-owned keys.

24/7 staffed security, biometric MFA and zero trust network design.

How we deliver

We focus on activation as well as allocation, ensuring GPU capacity is deployed, performant and ready for production.

Design

NVIDIA reference-aligned architectures validated for training and inference. Cluster design covering InfiniBand fabrics, high-performance storage (VAST, Weka, DDN), power, cooling and rack layout.

Deploy

Secured NVIDIA systems through established OEM channels. Power-ready European sites with confirmed timelines. Hardware delivery through Dell, Lenovo, Supermicro and HPE partnerships.

Run

Foundational SRE as standard, not an add-on. 24/7 monitoring, incident response, firmware management and NVIDIA escalation paths. Clusters enter production in a stable, reference-aligned state.

Operating at global enterprise scale

CUDO Compute operates across ISO 27001-certified facilities in North America, Europe, the UK and MENA, supporting enterprise AI infrastructure at global scale

Security and compliance

ISO 27001 Information Security

ISO 14001 Environmental Management

GDPR-aligned operations

Sovereign data residency enforcement

Capacity pipeline

250MW+ contracted by end 2026

750MW+ targeted by end 2027

Multi-GW pipeline including exclusive European sites

Book a strategy session

Connect with our AI Factory team to discuss tailored deployments, architecture timelines and commercial models designed for sovereign-scale infrastructure.