All posts

Resources

GPU pricing is still the cost most operators plan around, but it is no longer the deciding factor in whether

Data center power density is approaching a thermodynamic limit, with average rack power doubling to 17 kW in two years,

- Emmanuel Ohiri

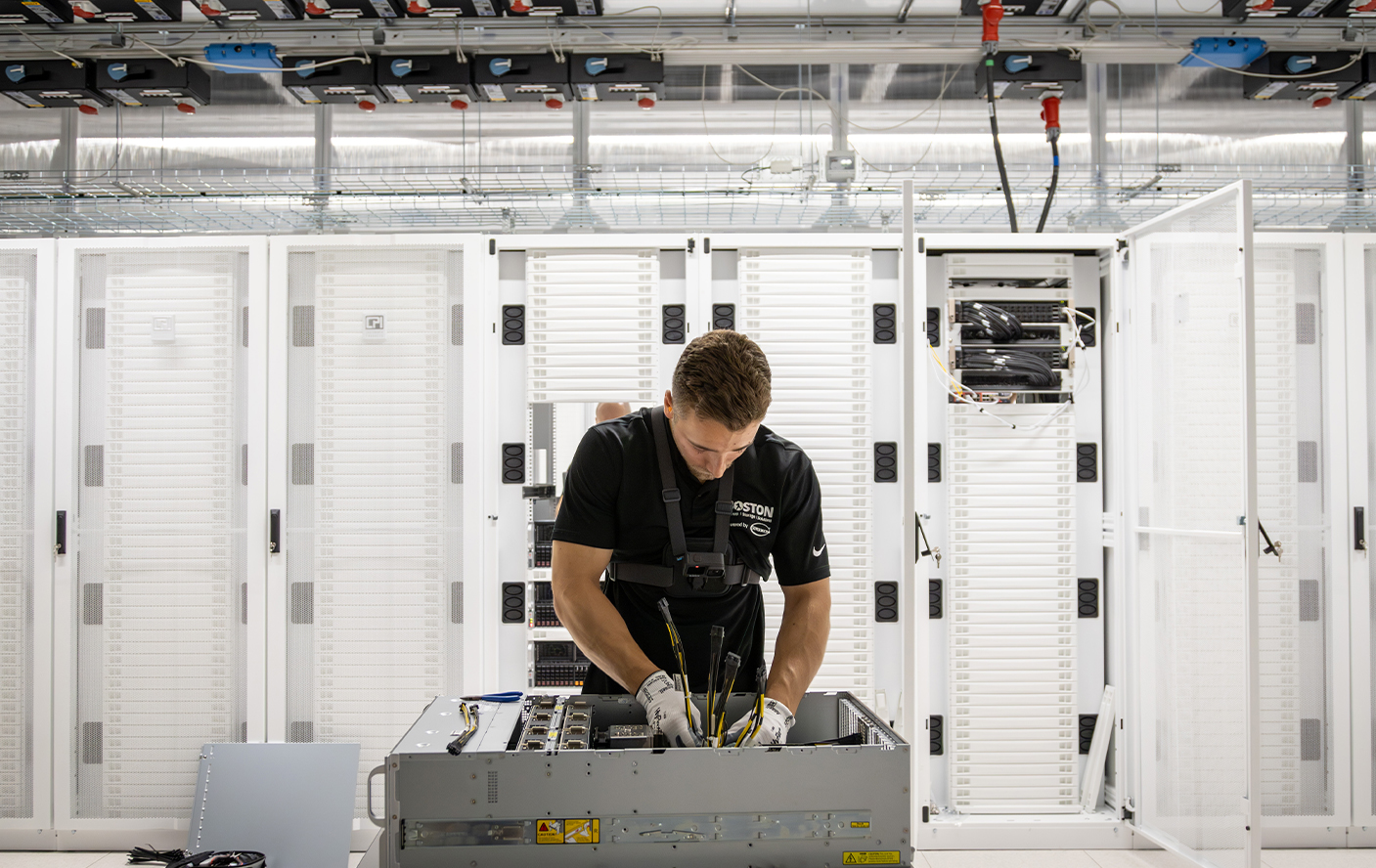

Hardware bottlenecks strand expensive compute. We detail the precise site readiness, cooling, and storage configurations needed to scale AI racks

- Emmanuel Ohiri

Power is no longer a background variable in AI infrastructure. It is a first-order constraint that sets the ceiling on

- Emmanuel Ohiri

Global data center capacity will nearly triple by 2030, with AI driving most demand. Traditional infrastructure planning no longer works

- Emmanuel Ohiri

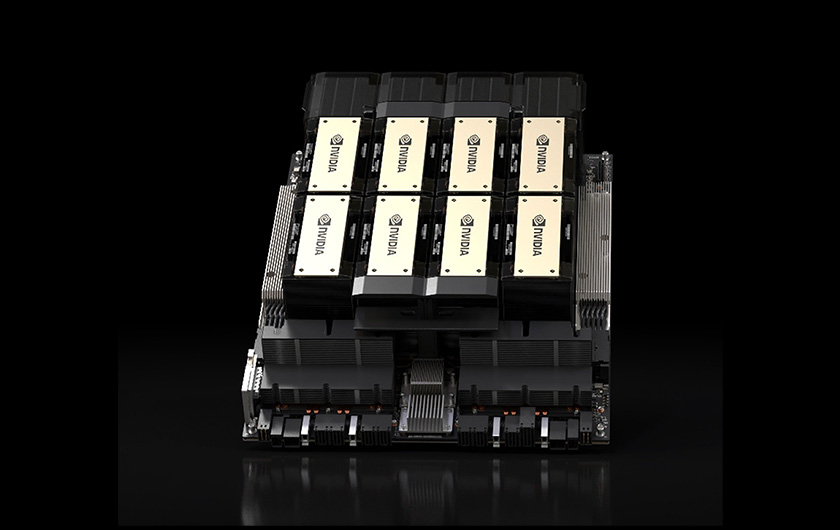

Read a comprehensive comparison of NVIDIA's H100 and H200 GPUs. Discover the expected improvements and performance gains for AI and

- Emmanuel Ohiri

NVIDIA unveiled its next-generation Blackwell-based GPUs at NVIDIA GTC 2024, a pivotal breakthrough in AI technology.

- Emmanuel Ohiri

Ensemble learning combines the strengths of different algorithms to achieve greater accuracy and solve complex problems.

- Emmanuel Ohiri

Deploy production-scale enterprise AI

Secure GPU infrastructure aligned to NVIDIA Enterprise Reference Architecture, delivered with the power, engineering and operational support required for production AI deployments.