CUDO Resources

Resources

Learn how to optimise architecture fit, memory bandwidth, and cluster topology to maximise ROI and avoid wasted cloud spend.

Real-world benchmarks demonstrating performance variations across different GPU cloud infrastructures

The H100 reduces training costs, and the L40S beats A100 on inference $/token efficiency—real-world benchmarks reveal where each GPU maximises

Emmanuel Ohiri

A 2025 survey found 88.8% of IT leaders believe a single cloud provider shouldn’t control their entire stack. Learn how

Emmanuel Ohiri

Compare NVIDIA's A100 and H100 GPUs. Discover which is preferable for different user needs and how both models revolutionise AI

Emmanuel Ohiri

It is important to strike the right balance between learning too much and learning too little in your machine-learning models. We explore

Emmanuel Ohiri

Explore the economics of renting cloud GPUs. Compare pricing models, GPU types, and solutions like bare metal and clusters for

Emmanuel Ohiri

GPU-dense clusters demand grid-to-rack engineering. Covers power, cooling, layout, and water systems for AI-ready facilities.

Emmanuel Ohiri

A single checkpoint of a large AI model can require hundreds of gigabytes. Multiply that by frequent saves and large

Emmanuel Ohiri

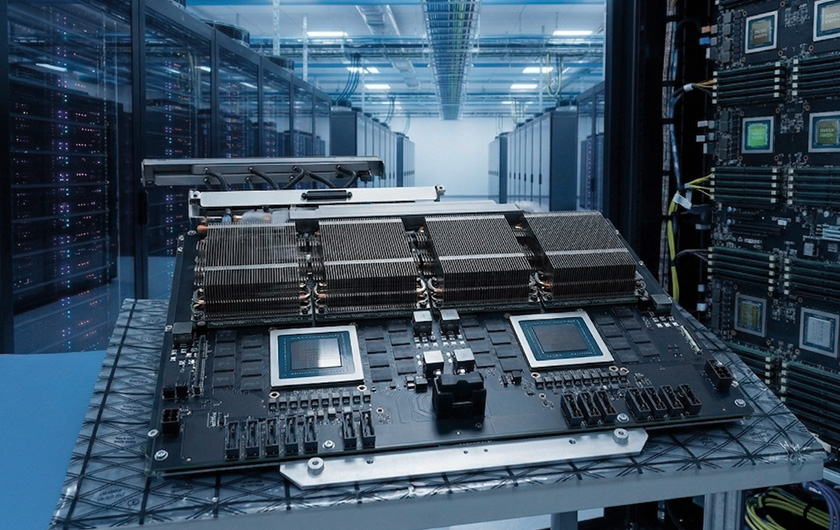

Deploy production-scale enterprise AI

Secure GPU infrastructure aligned to NVIDIA Enterprise Reference Architecture, delivered with the power, engineering and operational support required for production AI deployments.